Computing Correlators

[A more technical post follows]

My most recent paper, out on the arXiv today, is very exciting to me because it seems to be a genuinely new way of computing some important quantities and it is devilishly simple. So simple that I worried for months that it is all super-obvious to everyone. But another voice within me said: Well, if it is so obvious, why has nobody published it? Another (paranoid) voice within said: Maybe someone has published this method, and I just can’t find it in the literature…

Well, I decided that the best way to find out for sure is to put it on the arXiv and within a short time someone will email to say that I missed their important work. So, while I wait for that email (as I start writing it’s only been 30 minutes since it has been “out there”, so there’s time), let me say a few things about why I like the many results in the paper.

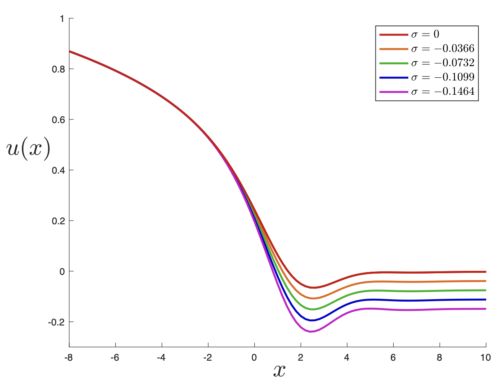

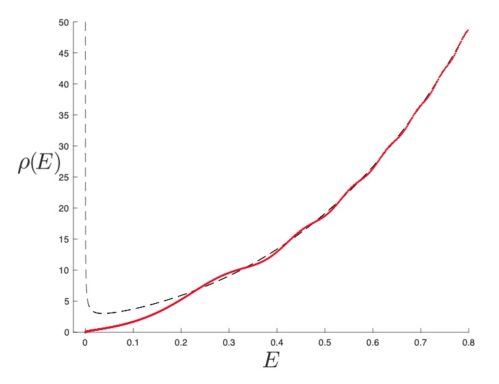

I was already pleased enough with the core part of the paper that I was going to write a swift four-pager about it back in February. The core point being that I figured out how to build on work I’d done in a paper back in 2024 (expanded on with followup work I did with Wasif Ahmed and Krishan Saraswat, a student and postdoc). Back in 2024, I found (here) a really nice way (almost miraculous in how it worked) of writing all the corrections to the spectral density of a class of models in terms of one function [latex]u_0[/latex] and its derivatives. It was obtainable from one simple ordinary differential equation (ODE) called the Gel’fand-Dikii equation, which takes in the function [latex]u_0(x)[/latex] as input. The ODE is for a special quantity called the diagonal resolvent [latex]{\widehat R}(x,E)[/latex]. You integrate that quantity [latex]\widehat R(x,E)[/latex] with respect to [latex]x[/latex] and you’re more or less home. In general, it is a messy quantity that does not integrate to anything nice. But just when the function [latex]u(x)[/latex] obeys the “string equation” it is supposed to (as dictated by the governing model’s physics), then [latex]{\widehat R}(x,E)[/latex] is a total derivative (a seeming miracle; see later), and the corrections it gives to the density become of just the right form!
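For readers who want to see it written out, here is a sketch of the equation in one common normalisation. The factors of 4 and the placement of [latex]\hbar[/latex] (the genus-counting parameter) are a convention assumed here for illustration, and may differ from the one used in the paper:

[latex]4\left(u(x)-E\right){\widehat R}^2-2\hbar^2{\widehat R}{\widehat R}''+\hbar^2\left({\widehat R}'\right)^2=1[/latex]

Here a prime means [latex]\partial/\partial x[/latex]. Setting [latex]\hbar=0[/latex] gives the leading solution, up to a choice of overall sign:

[latex]{\widehat R}_0=-\frac{1}{2}\frac{1}{\sqrt{u_0(x)-E}}[/latex]

Feeding that back in at order [latex]\hbar^2[/latex], and ignoring for simplicity any [latex]\hbar[/latex] corrections to [latex]u(x)[/latex] itself, gives the first correction:

[latex]{\widehat R}_2=-\frac{1}{64}\left[\frac{5\,(u_0')^2}{(u_0-E)^{7/2}}-\frac{4\,u_0''}{(u_0-E)^{5/2}}\right][/latex]

The pattern continues: each order is fixed algebraically by the ones before it, with no integration constants to chase.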

Those corrections can be called [latex]W_{g,1}(E)[/latex] where the [latex]g[/latex] is the order in perturbation theory. [latex]g=0[/latex] is leading order, [latex]g=1[/latex] is the torus, [latex]g=2[/latex] the double torus, etc. Indeed [latex]g[/latex] is the number of handles or “genus” of an associated Riemann surface. The subscript 1, on the other hand, corresponds to the single energy argument available when just discussing the density [latex]\rho(E)[/latex]. All the [latex]W_{g,1}[/latex] end up being written nicely in terms of a function [latex]u_0(x)[/latex] and its derivatives, evaluated at a special point.

An already nice feature (among many) of the construction was that this one ODE, recursively solved, gave rise to the [latex]W_{g,1}[/latex] of many different problems across a range, including certain random matrix models, gravity problems, intersection theory and topology, and so on. All you need to do is change the function [latex]u_0(x)[/latex]. Moreover, for this (wide) class of problems, you can compute the desired results faster and with way less machinery than other methods, such as topological recursion, which was an interesting observation. This includes very famous problems like the Weil-Petersson volumes (of the compactified moduli space [latex]\overline{\cal M}_{g,1}[/latex] of Riemann surfaces with genus [latex]g[/latex] and [latex]n=1[/latex] boundaries) and generalisations. Another nice feature is that you also get non-perturbative data beyond the genus expansion, an aspect I explored recently (in this paper) with student Joao Rodrigues, an expert in resurgence techniques.
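To give a flavour of what "recursively solved" means, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the code behind the paper. It assumes the Gel’fand-Dikii equation in the normalisation 4(u - E)R^2 - 2ħ^2 R R'' + ħ^2 (R')^2 = 1, and it takes the simplest possible input, u(x) = -x (the Airy-like case), chosen here only because every order then collapses to a single power of s = -x - E. The function name `airy_resolvent_coeffs` is made up for this example.

```python
from fractions import Fraction


def airy_resolvent_coeffs(n_max):
    """Coefficients c_k in R = sum_k hbar^(2k) * c_k * s^(-(3k + 1/2)),
    with s = -x - E, for the assumed input u(x) = -x.

    Assumed equation (normalisation is an assumption of this sketch):
        4 (u - E) R^2 - 2 hbar^2 R R'' + hbar^2 (R')^2 = 1
    """
    half = Fraction(1, 2)
    # Exponents a_k = 3k + 1/2 of 1/s appearing at order hbar^(2k).
    a = [3 * k + half for k in range(n_max + 1)]
    # Order hbar^0: 4 s R_0^2 = 1, so c_0 = -1/2 (overall sign is a convention).
    c = [Fraction(-1, 2)]
    for k in range(1, n_max + 1):
        # Cross terms of 4 s R^2 at order hbar^(2k) that do not contain c_k.
        rhs = -4 * sum(c[i] * c[k - i] for i in range(1, k))
        # Derivative terms -2 R R'' + (R')^2, built from the lower orders.
        # With ds/dx = -1: R_j' = a_j c_j s^(-a_j - 1),
        #                  R_j'' = a_j (a_j + 1) c_j s^(-a_j - 2).
        for i in range(k):
            j = k - 1 - i
            rhs += c[i] * c[j] * (2 * a[j] * (a[j] + 1) - a[i] * a[j])
        # The only remaining unknown enters linearly, as 8 c_0 c_k.
        c.append(rhs / (8 * c[0]))
    return c


print(airy_resolvent_coeffs(2))
# [Fraction(-1, 2), Fraction(-5, 64), Fraction(-1155, 4096)]
```

Each new coefficient comes from a single linear equation in terms of the earlier ones, which is why the method is so economical. For a general [latex]u_0(x)[/latex] the same steps go through, but each order is a combination of derivatives of [latex]u_0[/latex] rather than a single number, so one would reach for a computer algebra system instead of plain fractions.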

The core breakthrough of the new paper is this: For some time, I’ve wondered how to compute correlators for more energies (amounting to multi-point correlators of [latex]\rho[/latex]) in this same way: […] Click to continue reading this post
